Finite-State Machines (FSM) are the bread-and-butter of game AI due to their simplicity (both in implementation and theory) and effectiveness. As such, FSMs are the topic of many tutorials and guides. Unfortunately, most of them focus on the States part of FSM. After all, they are called Finite-State Machines, so you expect that states are the critical part.

Well, no. The critical part is the other: transitions.

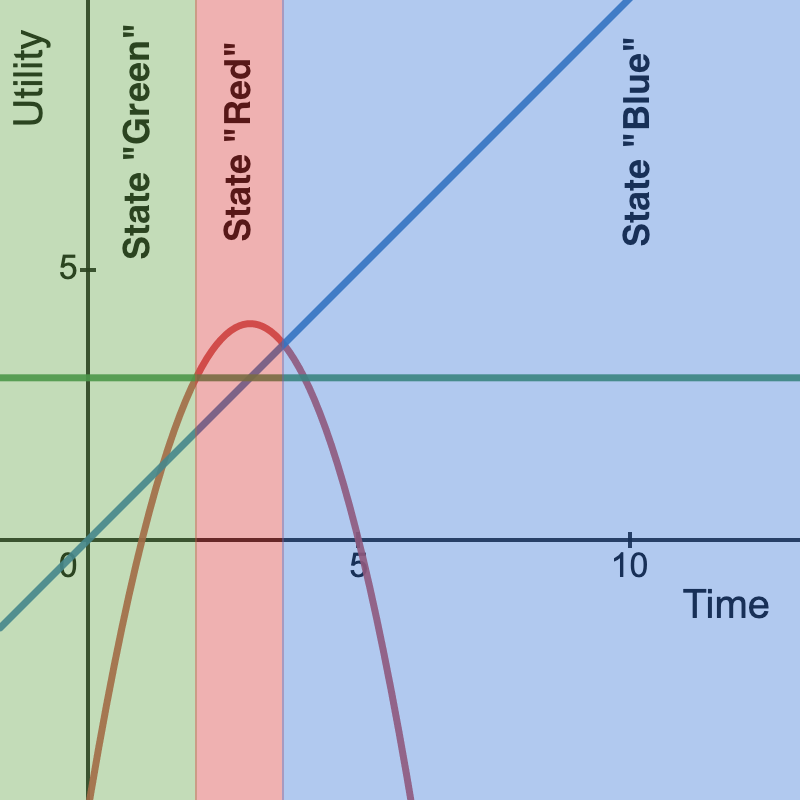

Transitions can make or break your AI independently of how carefully crafted the states are. In other words, intelligence is in change, and the element of change in an FSM is represented by transitions.

Unfortunately, transitions in real-world examples are more complex than in FSMs tutorials. For example, suppose we have a simple character in a shooting game and assume we have three states: shoot, flee, and heal. The first will make the character attack the player, the second will make the character run away from the player, and the third will make the character run toward the nearest healing spot.

That’s sound. However, how do we transition between the states? The simplest thing would be to write three boolean conditions, as usual.

The character will flee when their HPs are less than 50%, and we are close to the player, and they will heal when their HPs go under 25%.

It seems fine at first. But then we realize that there are some ambiguous situations. For example, the character can be near the player with less than 25% HP. Will they flee or not?

Depends. Is the health location closer than the player’s? How much HP does the character have? Are they at 24% or at 1%? Because at 24%, the NPC may risk rushing to the health location, while at 1%, it will be suicide.

There are many decisions here. Unfortunately, none of them is easy to encode with an if-then-else rule. What if we use a smoother approach?

Introducing Utility Functions

So imagine you are working on your game. You lose track of time, and suddenly, you feel uncomfortable. You are hungry. And thirsty. Where do you go? You go to grab a bite by walking to the kitchen, or you get your water bottle inside a fridge on the opposite side? (Yes, you really botched the setup of your working place)

Depends. Are you more hungry or more thirsty? What are the things that you need to address with more urgency? Or, said in another way, what action will provide you with the most utility?

You can imagine having some hidden “utility trackers,” translating every urgency/utility into a numeric value. So that you can make a decision. For instance, you can simply compare the “thirst utility” and “hunger utility” numbers and choose to go toward the greater utility.

Let’s try to code this. I’ll write this in a small Pico8 demo.

First, we set up a small scene with three objects: a burger, a glass of water, and our character. The following code initializes the state of these objects.

| |

Next, we initialize the draw function. This is just basic Pico8 stuff. Don’t worry. It is not required to understand the AI. We are just rendering the three sprites for the hamburger, water, and player, plus writing the value of the hunger and thirst utility trackers.

| |

Now, during the update step, we need to do two things: update the world state and trigger the AI.

| |

The updatestate function is elementary. We increase the player’s hunger and thirst at every frame by a small value. Then, we check if the player is near the burger or the water, and we zero the corresponding bodily sensation (if I eat a burger, I have zero hunger; if I drink water, I have zero thirsts).

Finally, let’s look at the AI.

| |

As you can see, it is pretty simple. We can summarize it in a few steps:

1. Compute the Utility Vector

The Utility Vector maps states and utilities. So, its length is equal to the number of possible states. In our example, we have three states: fetch food, fetch water, and idle. Therefore, our utility vector will contain 3 values: the utility for bringing food (\( u_f \)), the utility for fetching water (\( u_w \)), and the utility of for being still (\(u_i\)).

![WARNING] Remember to normalize your utility functions. Your utility functions should return comparable values. If you measure

thirstin the 0-1 range andhungerin the 10-1000 range, the system will always pick the get food state!

In our example, we use hunger as the utility for fetching food (\( u_f = hunger \)), thirst as the utility for fetching water (\( u_w = thirst \)), and constant 1 as the utility for being still (\( u_i = 1 \)). However, you can use any function you like.

For instance, imagine we want the weather temperature to influence the behavior of the AI. We may wish to tweak the utility so that, in hot environments, we prioritize getting water. We can just add something proportional to the temperature to the utility for fetching water (\( u_w = thirst + k\cdot temperature \)). Simple as that.

ℹ️ Info

The implementation of this tweak could be a good exercise. In addition, you may want to experiment with different functions mapping thirst and temperature to the utility of getting water.

2. Select the state corresponding to the maximum utility

In our example, we use a maxArg function to return the array’s index of the maximum value. This is usually good enough.

3. Run the AI for that state

Once we have the state with the most utility, we run such a state.

Example in Action

You can see the embedded demo here, or use the png cartridge on your environment as a starting point for further experiments.

Final Comments

There are two comments I want to add.

First, utility-based transitions work best when our state diagrams are complete. That is when we allow the AI to jump from any starting state to any destination state. The most evident example is The Sims (Maxis, 2000) , where the Sims can start any action at any time depending on their urges. Of course, it is possible to block a transition (e.g., an NPC in state Foo cannot move to state Bar), but this requires encoding this limitation into the utility function itself (for instance, by dropping the utility of Bar to minus infinity if the NPC is in Foo state). As you can imagine, this can quickly become cumbersome.

Second, you may ask, “isn’t this fuzzy logic”? Yes. We can think of Fuzzy FSMs as a special kind of utility-based AIs. But in my opinion, focusing too much on fuzzy logic can become limiting or make things more complicated (i.e., we don’t need to restrict our utility values to the 0-1 interval).

In my experience, utility-based state machines allowed me to have complex behavior without too much code. As a result, it has been my go-to technique for prototypes.

I hope it may be helpful for you too.